Most Kubernetes manifests start their life as a copy-paste. Someone, somewhere, picked numbers that looked safe:

    resources:
      requests:
        cpu: "1000m"
        memory: "2Gi"
      limits:
        cpu: "2000m"
        memory: "4Gi"

Those numbers ship. They never get revisited. The deployment runs, pagers stay quiet, job done.

What's actually happening is that the pod has reserved 1 full CPU core and 2 GiB of memory on whichever node it lands on — whether it uses them or not. Multiply that across dozens of services, three environments, and a Prometheus instance with 2Gi of headroom it never touches, and you're paying for capacity that mostly sits idle. You're also burning electricity, and that electricity has a carbon cost.

This post is about how to find better numbers — using an open-source tool called Goldilocks plus the Vertical Pod Autoscaler — and why it's worth doing for reasons beyond your cloud bill.

A 60-second primer on requests and limits

If you live and breathe Kubernetes, skip this section. If you don't:

- requests are the resources your pod is guaranteed by the scheduler. When Kubernetes places your pod on a node, it subtracts your request from that node's available capacity. Two pods each requesting 1 CPU on a 2-CPU node fill it up, and no more pods can be scheduled there.

- limits are the ceiling. The pod can use up to its limit, no further. Exceeding the memory limit gets the container killed (the dreaded OOMKilled); hitting the CPU limit causes throttling.

The crucial bit: requests reserve capacity even when the pod is idle. A pod that requests 1 CPU but uses 50m on average has effectively claimed a CPU it isn't using. The node can't hand it to anyone else. That's the waste we're after.
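To put numbers on that, here is a minimal Python sketch using the figures from this section: a 2-CPU node, pods requesting 1 CPU each, and an average usage of 50m per pod. It assumes the whole 2000m is allocatable, which real nodes never quite manage because the kubelet and system daemons reserve a slice. The function names are illustrative.

```python
def pods_that_fit(node_allocatable_m: int, request_m: int) -> int:
    """Pods the scheduler can place on a node, counting CPU requests only."""
    return node_allocatable_m // request_m


def reserved_but_idle_m(request_m: int, usage_m: int, pods: int) -> int:
    """MilliCPU that is reserved by requests but not actually used."""
    return (request_m - usage_m) * pods


node_m = 2000      # 2-CPU node, assumed fully allocatable
request_m = 1000   # each pod requests 1 CPU
usage_m = 50       # each pod averages 50m of real usage

pods = pods_that_fit(node_m, request_m)
idle_m = reserved_but_idle_m(request_m, usage_m, pods)
utilisation = pods * usage_m / node_m

print(f"pods scheduled: {pods}")
print(f"reserved but idle: {idle_m}m")
print(f"node CPU actually used: {utilisation:.0%}")
```

With these inputs the node is full from the scheduler's point of view while only about 5% of its CPU is doing work.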

Why this is a sustainability problem, not just a cost one

There's a fairly direct chain between idle Kubernetes capacity and carbon emissions:

- Reserved-but-idle CPU and RAM keep nodes alive. A modern server's idle power draw is significantly lower than its fully-loaded draw, but it's far from zero. The idle floor is a big chunk of the curve.

- Over-provisioned requests force more nodes. If every pod claims 2× what it needs, your cluster autoscaler spins up 2× the nodes to fit them. Each extra node has its own idle draw — plus the embodied carbon of the hardware it runs on, amortised over its life.

- Idle servers still need cooling. Data center PUE (Power Usage Effectiveness) numbers mean every watt of compute is roughly 1.1–1.5W of total facility draw, the difference being mostly cooling and power conversion losses.

FinOps and GreenOps point at the same waste from different angles. Your unused 2Gi of memory is both money and CO₂. The pleasant part: the fix is the same fix.
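The chain above can be turned into rough arithmetic. The sketch below is illustrative only: the 100 W idle draw per node, the 4-CPU node size, and the 20-CPU right-sized total are made-up inputs, and the PUE of 1.3 is simply a value inside the range quoted above. It ignores embodied carbon and any load-dependent power.

```python
import math


def nodes_needed(total_request_m: int, node_allocatable_m: int) -> int:
    """Nodes required to fit the summed CPU requests (perfect packing assumed)."""
    return math.ceil(total_request_m / node_allocatable_m)


def facility_watts(it_watts: float, pue: float) -> float:
    """Total facility draw for a given IT load, using PUE as the multiplier."""
    return it_watts * pue


right_sized_m = 20_000                   # hypothetical: what the workloads need
over_provisioned_m = 2 * right_sized_m   # every pod claims 2x what it needs
node_m = 4_000                           # hypothetical 4-CPU nodes
idle_watts_per_node = 100.0              # hypothetical idle draw per node
pue = 1.3                                # within the 1.1-1.5 range above

extra_nodes = nodes_needed(over_provisioned_m, node_m) - nodes_needed(right_sized_m, node_m)
extra_watts = facility_watts(extra_nodes * idle_watts_per_node, pue)

print(f"extra nodes kept alive: {extra_nodes}")
print(f"extra facility draw: {extra_watts:.0f} W")
```

The absolute figures mean little, but the shape holds: doubling requests doubles the node count, and PUE scales whatever those nodes draw.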

Enter Goldilocks

Goldilocks is an open-source tool from Fairwinds. It runs the Vertical Pod Autoscaler (VPA) in recommendation mode: VPA observes your pods' real CPU and memory usage over time and computes what they'd ideally request, but doesn't change anything automatically. Goldilocks wraps this in a dashboard that shows the recommendations per workload, side by side.

Recommendation mode is the important part: Goldilocks only observes and suggests. You install it, label the namespaces you care about, let it watch, then read off the numbers. Nothing automatically rewrites your deployments. You stay in the loop.
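For a sense of what recommendation mode means at the API level, the sketch below builds the kind of VerticalPodAutoscaler object involved and prints it as JSON (which kubectl accepts alongside YAML). The key field is updatePolicy.updateMode set to "Off", which tells VPA to compute recommendations without applying them. The workload name, the namespace, and the goldilocks- name prefix are placeholders for illustration. Goldilocks creates these objects for you in labelled namespaces, so you would not normally write one by hand.

```python
import json


def recommendation_only_vpa(name: str, namespace: str, kind: str = "Deployment") -> dict:
    """Build a VPA manifest that recommends but never updates pods."""
    return {
        "apiVersion": "autoscaling.k8s.io/v1",
        "kind": "VerticalPodAutoscaler",
        # The name prefix is an assumption made for this example.
        "metadata": {"name": f"goldilocks-{name}", "namespace": namespace},
        "spec": {
            "targetRef": {"apiVersion": "apps/v1", "kind": kind, "name": name},
            # "Off" means: calculate recommendations, change nothing.
            "updatePolicy": {"updateMode": "Off"},
        },
    }


vpa = recommendation_only_vpa("my-app", "my-namespace")
print(json.dumps(vpa, indent=2))
```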

The walk-through

Assume you've got a cluster and kubectl access.

1. Install metrics-server (if you don't have it already):

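    helm repo add metrics-server https://kubernetes-sigs.github.io/metrics-server/
    # --kubelet-insecure-tls skips kubelet certificate verification;
    # drop it if your kubelets serve certificates signed by the cluster CA.
    helm upgrade --install metrics-server metrics-server/metrics-server \
      -n metrics-server --create-namespace \
      --set 'args={--kubelet-insecure-tls}'

2. Install VPA (if it's not already there):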

    kubectl get po -A | grep vert   # check first
    helm repo add cowboysysop https://cowboysysop.github.io/charts/
    helm upgrade --install vertical-pod-autoscaler -n vpa --create-namespace \
      cowboysysop/vertical-pod-autoscaler

3. Install Goldilocks:

    helm repo add fairwinds-stable https://charts.fairwinds.com/stable
    helm upgrade --install goldilocks -n goldilocks --create-namespace \
      fairwinds-stable/goldilocks

4. Open the dashboard:

    kubectl -n goldilocks port-forward svc/goldilocks-dashboard 8080:80
    # Then visit http://127.0.0.1:8080

5. Label the namespaces you want to analyse. This is the bit that switches Goldilocks "on" for a workload, since it only generates recommendations for namespaces it's been told to watch:

    kubectl label ns my-namespace goldilocks.fairwinds.com/enabled=true
    # or for several at once:
    kubectl label ns frontend backend api goldilocks.fairwinds.com/enabled=true

6. Wait. A few hours at minimum, ideally a full day that covers a representative workload. If your traffic is spiky, run a load test in the window. The longer the observation period, the better the recommendation.

7. Read the recommendations. The dashboard shows, per container, suggested requests and limits under two profiles: Guaranteed and Burstable. These are Kubernetes Quality of Service (QoS) classes — which one to pick depends on the workload. More on that next.

A short detour: which QoS class?

Kubernetes assigns every pod one of three QoS classes, based on how you set requests and limits:

- Guaranteed: every container has requests set equal to limits, for both CPU and memory. The pod has reserved exactly what it needs and can never use more.

- Burstable: at least one container has a request set, but requests and limits aren't equal (or only one of them is set). The pod is guaranteed its requests, and can burst up to its limits when spare capacity is available.

- BestEffort: neither requests nor limits set. The pod takes whatever's going.

The class matters most when the cluster is under pressure. When a node runs low on memory, the kubelet evicts pods in roughly this order: BestEffort first, then Burstable pods that are exceeding their request, then Burstable pods within their request, and Guaranteed pods last. Guaranteed pods are the most protected. CPU pressure works differently: CPU is compressible, so pods are throttled rather than evicted. Each container is capped at its CPU limit, and when a node's CPU is contended, time is shared in proportion to requests, so pods with small or no requests get squeezed first.
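The classification rules can be sketched in a few lines of Python. This is a simplified illustration, not the kubelet's implementation: it looks only at CPU and memory, compares quantity strings literally (so "1000m" and "1" would count as different), and ignores init containers. The input shape, a list of per-container resources dicts, is an assumption made for the example.

```python
RESOURCES = ("cpu", "memory")


def qos_class(containers: list[dict]) -> str:
    """Classify a pod from its containers' resources blocks (simplified)."""
    any_set = False
    all_guaranteed = True
    for container in containers:
        requests = container.get("requests", {})
        limits = container.get("limits", {})
        for resource in RESOURCES:
            limit = limits.get(resource)
            # Kubernetes defaults an unset request to the limit, if there is one.
            request = requests.get(resource, limit)
            if request is not None or limit is not None:
                any_set = True
            if limit is None or request != limit:
                all_guaranteed = False
    if not any_set:
        return "BestEffort"
    return "Guaranteed" if all_guaranteed else "Burstable"


print(qos_class([{"requests": {"cpu": "500m", "memory": "1Gi"},
                  "limits": {"cpu": "500m", "memory": "1Gi"}}]))
print(qos_class([{"requests": {"cpu": "100m"}}]))
print(qos_class([{}]))
```

On a live cluster you don't need to work this out by hand: the class Kubernetes assigned is recorded in the pod's status.qosClass field.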

So which to pick:

- Guaranteed — for latency-sensitive or stateful workloads where unpredictable performance is a real operational problem: databases, primary API services, anything where a sudden eviction or throttle would page someone. The cost: you reserve the worst-case footprint full-time, even when traffic is light. Less efficient bin-packing, more nodes running, more idle draw.

- Burstable — for most stateless services. You reserve a sensible request based on typical usage, and let the limit absorb spikes. From a sustainability angle this is usually the right default: your cluster packs more pods per node, the autoscaler runs leaner, and you only "pay" — in capacity, in cost, in carbon — for what your workload typically uses.

- BestEffort — in theory the most efficient, since you reserve nothing. In practice we almost never use it: a BestEffort pod is the first thing evicted when a node gets cranky, often at exactly the moment you'd want the workload to keep running. Possibly a fit for short, retryable batch jobs. For anything user-facing, no.

A useful default: start with Burstable, escalate to Guaranteed only when you have a concrete reason. Concrete reasons being either "this workload has tight latency requirements" or "we've seen it get throttled or OOM-killed under realistic load." Don't pre-emptively put everything on Guaranteed — you'll burn capacity (and carbon) on headroom that never gets used.

A real example

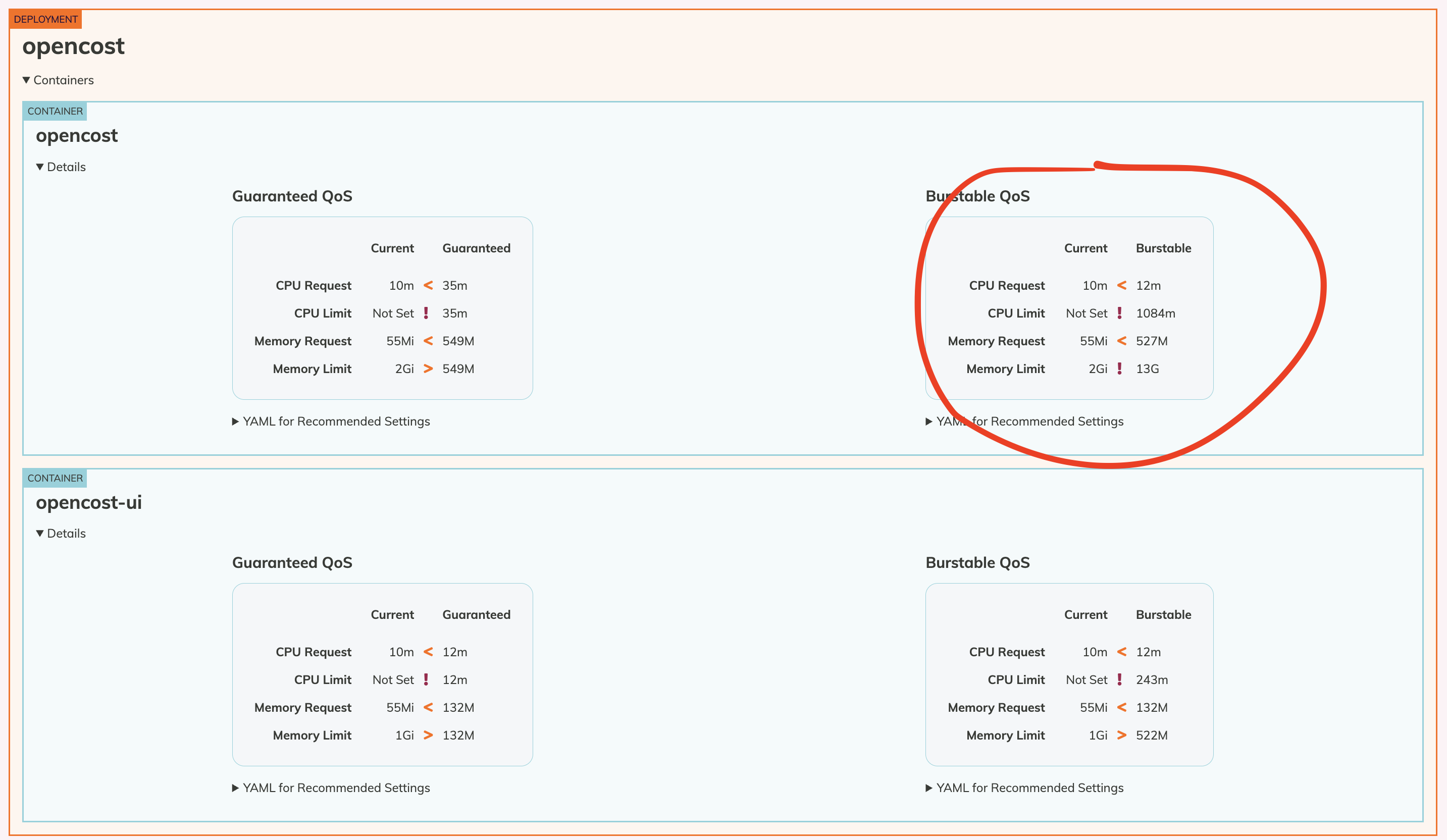

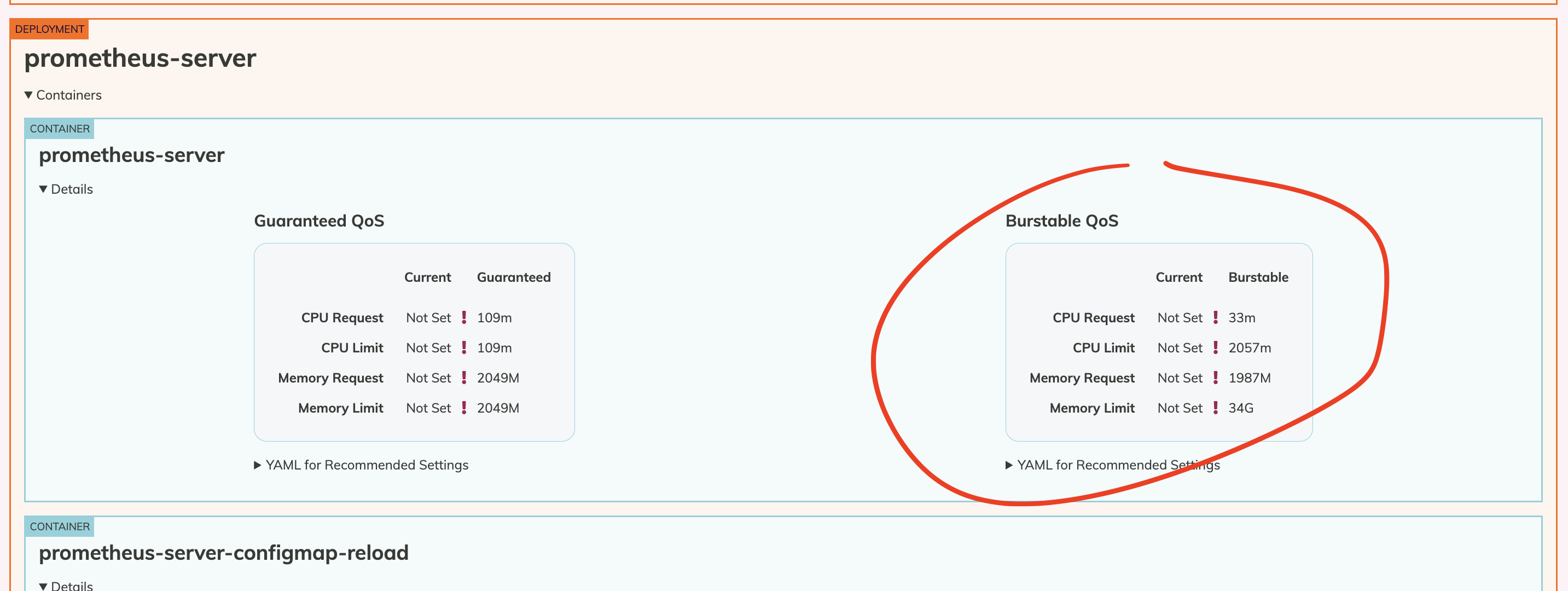

We ran this exercise on a client account for two workloads we suspected were over-provisioned: Opencost and Prometheus. After a day of observation, here's what Goldilocks suggested. We picked Burstable for both, because their resource use varies.

Goldilocks dashboard for the opencost deployment, with our chosen Burstable column circled.

And the same view for prometheus-server.

Translated to YAML:

    # opencost
    resources:
      requests:
        cpu: "12m"
        memory: "520Mi"
      limits:
        cpu: "700m"
        memory: "11Gi"

    # opencost-ui
    resources:
      requests:
        cpu: "12m"
        memory: "130Mi"
      limits:
        cpu: "200m"
        memory: "430Mi"

    # prometheus-server
    resources:
      requests:
        cpu: "33m"
        memory: "2000Mi"
      limits:
        cpu: "2060m"
        memory: "30Gi"

Look at those requests: 12 milliCPU, roughly 1% of a CPU core. The original deployments had been requesting hundreds of times that, blocking other workloads from being scheduled on the same node.
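Once requests shrink this much, it is worth checking which resource now limits packing. A rough sketch, using the opencost Burstable requests above and a hypothetical node with 2000m of CPU and 8Gi of memory allocatable. The node size is an assumption, and the calculation ignores other workloads and the per-node pod cap.

```python
def pods_per_node(node: dict, request: dict) -> tuple[int, str]:
    """Return how many copies fit and which resource runs out first."""
    fits = {resource: node[resource] // request[resource] for resource in request}
    binding = min(fits, key=fits.get)
    return fits[binding], binding


node = {"cpu_m": 2000, "memory_mi": 8 * 1024}        # hypothetical node
opencost_request = {"cpu_m": 12, "memory_mi": 520}   # requests from above

count, binding = pods_per_node(node, opencost_request)
print(f"{count} copies fit; the binding resource is {binding}")
```

With these inputs memory, not CPU, decides how many copies fit, so a right-sizing pass should look at both dimensions.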

You can also read the same data straight from VPA if you prefer the CLI:

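    kubectl describe vpa -A
    # ...
    # Recommendation:
    #   Container Recommendations:
    #     Container Name:  opencost
    #     Target:
    #       Cpu:     35m
    #       Memory:  476450463

Memory is reported in bytes. The Target value is what Goldilocks rounds and presents for the Guaranteed profile; for Burstable it uses the VPA's Lower Bound for requests and Upper Bound for limits.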
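A raw byte count is awkward to paste into a manifest. The helper below is a convenience sketch, not something Goldilocks itself runs: it converts a byte value such as the one in the VPA output above into a whole number of Mi, rounding up so the result never falls below the recommendation.

```python
MIB = 1024 * 1024


def bytes_to_mi(value: int) -> str:
    """Convert a byte count to a Kubernetes Mi quantity, rounding up."""
    whole_mi = (value + MIB - 1) // MIB   # integer ceiling division
    return f"{whole_mi}Mi"


print(bytes_to_mi(476450463))
```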

Clean up

If Goldilocks was a one-off tuning exercise, take it back out:

    kubectl label ns opencost goldilocks.fairwinds.com/enabled-
    kubectl label ns prometheus-system goldilocks.fairwinds.com/enabled-
    helm uninstall goldilocks -n goldilocks
    kubectl delete namespace goldilocks

Keep metrics-server running: it's useful well beyond this exercise. VPA in recommendation mode is also cheap to leave installed if you'd like ongoing visibility.

So what's the actual impact?

For a single workload, modest. For a cluster-wide right-sizing pass, the math gets interesting. Right-sizing typically lets you:

- Pack more pods onto fewer nodes, so the cluster autoscaler keeps fewer machines running.

- Avoid spinning up extra nodes during normal scaling events.

- In environments with off-hours scaling, drop your "minimum capacity" floor lower than you'd dare otherwise.

The carbon impact lives downstream of the cost impact. The relationship isn't 1:1, since emissions also depend on the grid mix in your region, time of day, and node utilisation patterns, but if your cluster cost drops by a meaningful fraction, your emissions drop in roughly the same ballpark.
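To see how much the grid mix matters, here is a back-of-the-envelope sketch. Every input is hypothetical: a steady 650 W of avoided facility draw, and two made-up grid intensities of 50 and 500 gCO2e per kWh standing in for a low-carbon and a fossil-heavy region.

```python
HOURS_PER_YEAR = 24 * 365


def annual_kwh(watts: float) -> float:
    """Energy used in a year by a constant draw, in kWh."""
    return watts * HOURS_PER_YEAR / 1000


def annual_kg_co2e(watts: float, grid_g_per_kwh: float) -> float:
    """Yearly emissions for a constant draw at a given grid intensity."""
    return annual_kwh(watts) * grid_g_per_kwh / 1000


saved_watts = 650.0  # hypothetical avoided facility draw
grids = {"low-carbon grid": 50.0, "fossil-heavy grid": 500.0}  # gCO2e/kWh, made up

for region, intensity in grids.items():
    kg = annual_kg_co2e(saved_watts, intensity)
    print(f"{region}: {kg:.0f} kg CO2e avoided per year")
```

The same saving in watts, and therefore in cost, differs tenfold in emissions between the two example grids.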

The bit I keep coming back to: this is one of the rare optimisations where the cost case, the performance case (better bin-packing means less scheduling pressure), and the sustainability case all point the same way. There's no tradeoff to negotiate. Just a dashboard to read.

Where to next

Right-sizing is the foundation. Once your running workloads are honest about what they need, the next question is whether they need to be running at all, all the time. In a follow-up post I'll cover using KEDA to switch off lower environments outside working hours — which, for most teams, is the single biggest sustainability lever sitting unused in a cluster.